ASPI has recently observed a coordinated inauthentic influence campaign originating on YouTube that’s promoting pro-China and anti-US narratives in an apparent effort to shift English-speaking audiences’ views of those countries’ roles in international politics, the global economy and strategic technology competition. We have published details of this campaign in a new ASPI report.

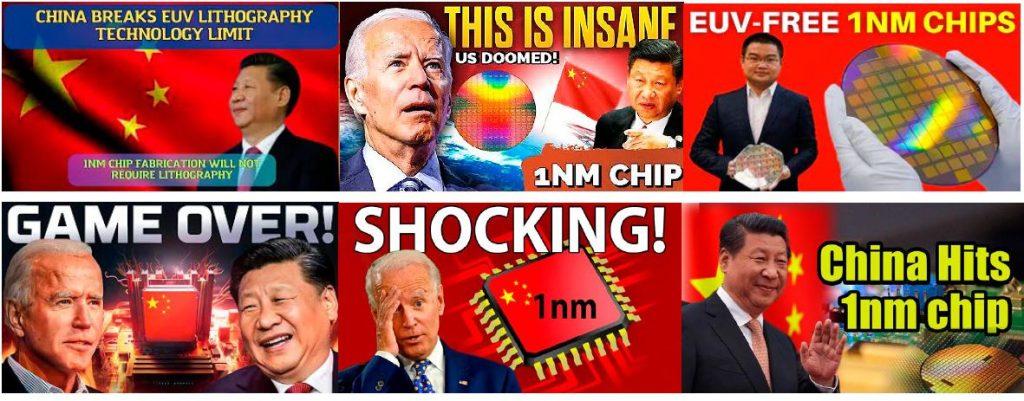

This new campaign, which ASPI has named ‘Shadow Play’, has attracted an unusually large audience and is using entities and voiceovers generated by artificial intelligence to broaden its reach and scale. The narratives it promotes include China’s efforts to ‘win’ the US–China technology war amid US sanctions targeting China. It also focuses on Chinese and US companies, for example through pro-Huawei and anti-Apple content.

The Shadow Play campaign involves a network of at least 30 YouTube channels that have produced more than 4,500 videos. Those channels have so far attracted just under 120 million views and 730,000 subscribers. The accounts began publishing content around mid-2022. The campaign’s ability to amass and access such a large global audience, and its potential to covertly influence public opinion on these topics, should be cause for concern.

ASPI reported our findings to YouTube/Google on 7 December for comment. By 8 December, they had taken down 19 YouTube channels from the Shadow Play network—10 for coordinated inauthentic behaviour and nine for spam. These channels now display a range of messages from YouTube indicating why they were taken down. For example, one was ‘terminated for violating YouTube’s community guidelines’, while another was ‘terminated due to multiple or severe violations of YouTube’s policy for spam, deceptive practices and misleading content or other Terms of Service violations’.

ASPI also reported our findings to British artificial intelligence company Synthesia, whose AI avatars were used by the network. On 14 December, Synthesia disabled the account used by one of the YouTube accounts for violating its news media reporting policy.

We believe it’s likely that this new campaign is operated by a Mandarin-speaking actor. Indicators of this actor’s behaviour don’t closely map to the behaviour of any known state actor that conducts online influence operations. Our preliminary analysis is that it could be a commercial actor operating under some degree of state direction, funding or encouragement. This could suggest that some patriotic companies increasingly operate China-linked campaigns alongside government actors.

The campaign focuses on promoting six narratives. Two of the most dominant are that China is ‘winning’ in crucial areas of global competition—first, in the ‘US–China tech war’ and second, in the competition for rare earths and critical minerals. Other key narratives are that the US is headed for collapse and its alliance partnerships are fracturing; that China and Russia are responsible, capable players in geopolitics; that the US dollar and the US economy are weak; and that China is highly capable and trusted to deliver massive infrastructure projects.

The montage below shows examples of the style of content generated by the network, which used multiple YouTube channels to publish videos alleging that China had produced a 1-nanometre chip without using a lithography machine.

This campaign is unique in three ways. First, there’s the broadening of topics; previous China-linked campaigns have been tightly targeted and often focused on a narrow set of topics. For example, the campaign’s narrative that China is technologically superior to the US is presented through detailed arguments on technology topics including semiconductors, rare earths, electric vehicles and infrastructure projects. In addition, it targets, via criticism and disinformation, US technology firms such as Apple and Intel.

Chinese state media outlets, Chinese officials and online influencers sometimes publish on these topics in an effort to ‘tell China’s story well’ (讲好中国故事). A few Chinese state-backed inauthentic information operations have touched on rare earths and semiconductors, but never in depth or by combining multiple narratives in one campaign package. The broader set of topics and opinions in this campaign may demonstrate greater alignment with the known behaviour of Russia-linked threat actors.

Second, the campaign’s leveraging of AI points to a change in techniques and tradecraft. To our knowledge, this is one of the first times that video essays, together with generative AI voiceovers, have been used as a tactic in an influence operation. Video essays are a popular style of medium-length YouTube video in which a narrator makes an argument through a voiceover, while content to support their argument is displayed on the screen.

This continues a trend in which threat actors increasingly use off-the-shelf video-editing and generative AI tools to produce convincing, persuasive content at scale that can build an audience on social-media services.

We also observed one account in the YouTube network using an avatar created by Sogou, one of China’s largest technology companies. We believe this is the first instance of a Chinese company’s AI-generated avatar being used in an influence operation.

Third, unlike previous China-focused campaigns, this one has attracted large numbers of views and subscribers. It has also been monetised, although only through limited means. For example, one channel accepted money from US and Canadian companies to support production of its videos. The substantial number of views and subscribers suggests that the campaign is one of the most successful influence operations related to China ever witnessed on social media.

Many China-linked influence operations, such as Dragonbridge (also known as ‘Spamouflage’ in the research community), have succeeded in attracting initial engagement but failed to draw a large audience on social media. However, further research by YouTube is needed to determine whether the view and subscriber counts reflect real viewership, were artificially manipulated, or both.

In our examination of comments on videos in this campaign, we saw signs of a genuine audience. ASPI believes that this campaign is probably larger than the 30 channels covered in the report, but we constrained our initial examination to channels we saw as core to the campaign. We also believe there to be more channels publishing content in non-English languages; for example, we saw channels publishing in Bahasa Indonesia that aren’t included in the report.

That’s not to say that the effectiveness of influence operations should be measured only through engagement numbers. As ASPI has previously demonstrated, Chinese Communist Party influence operations that troll, threaten and harass on social media seek to silence and cause psychological harm to those being targeted, rather than seeking engagement. Similarly, influence operations can be used to ‘poison the well’ by crowding out the content of genuine actors in online spaces, or to poison datasets used for AI products, such as large language models.

The report also discusses another way that an influence operation can be effective: through its ability to spill over and gain traction in a wider system of misinformation. We found that at least one narrative from the Shadow Play network—that Iran had switched on its China-provided BeiDou satellite system—began to gain traction on X and other social-media platforms within a few hours of its posting on YouTube.

To fight against influence operations on social media, the report recommends that trust and safety teams at social-media companies, analysts in government and the open-source-intelligence research community immediately investigate this ongoing information operation, including operator intent and the scale and scope of YouTube channels involved.

We recommend that social-media and technology companies require users to disclose when generative AI is used in audio, video and image content and institute an explicit ban in their terms of service on the use of their content or platforms in influence operations.

We also recommend broader efforts by the Five Eyes countries and allied partners to declassify open-source social-media-based influence operations and share information with like-minded nations, relevant non-government organisations and the private sector, as well as consideration of whether national intelligence-collection priorities support the effective amalgamation of information on Russia-, China- and Iran-linked information operations.